AI and the Art of Storytelling: How Automation is Changing the Creative Process (and Everything Else)

Image by @steve_j via Unsplash

AI. Two little letters that seem to signal so much today. Artificial Intelligence (AI) burst onto the scene when OpenAI released ChatGPT in late 2022 (OpenAI), but generative AI has been studied and developed since the 1960s (Read a brief history here). Three years later, AI saturates our educational, professional, and personal lives. It is accessible enough for everyday use, and because it is powered by an advanced large language model (LLM), using it can feel like chatting with another person. But what is it, really? And should we be worried about it?

In the first half of this two-part series, we will explore the basics of generative AI, weigh some of its benefits and drawbacks, and grapple with a few of its complications.

The Basics

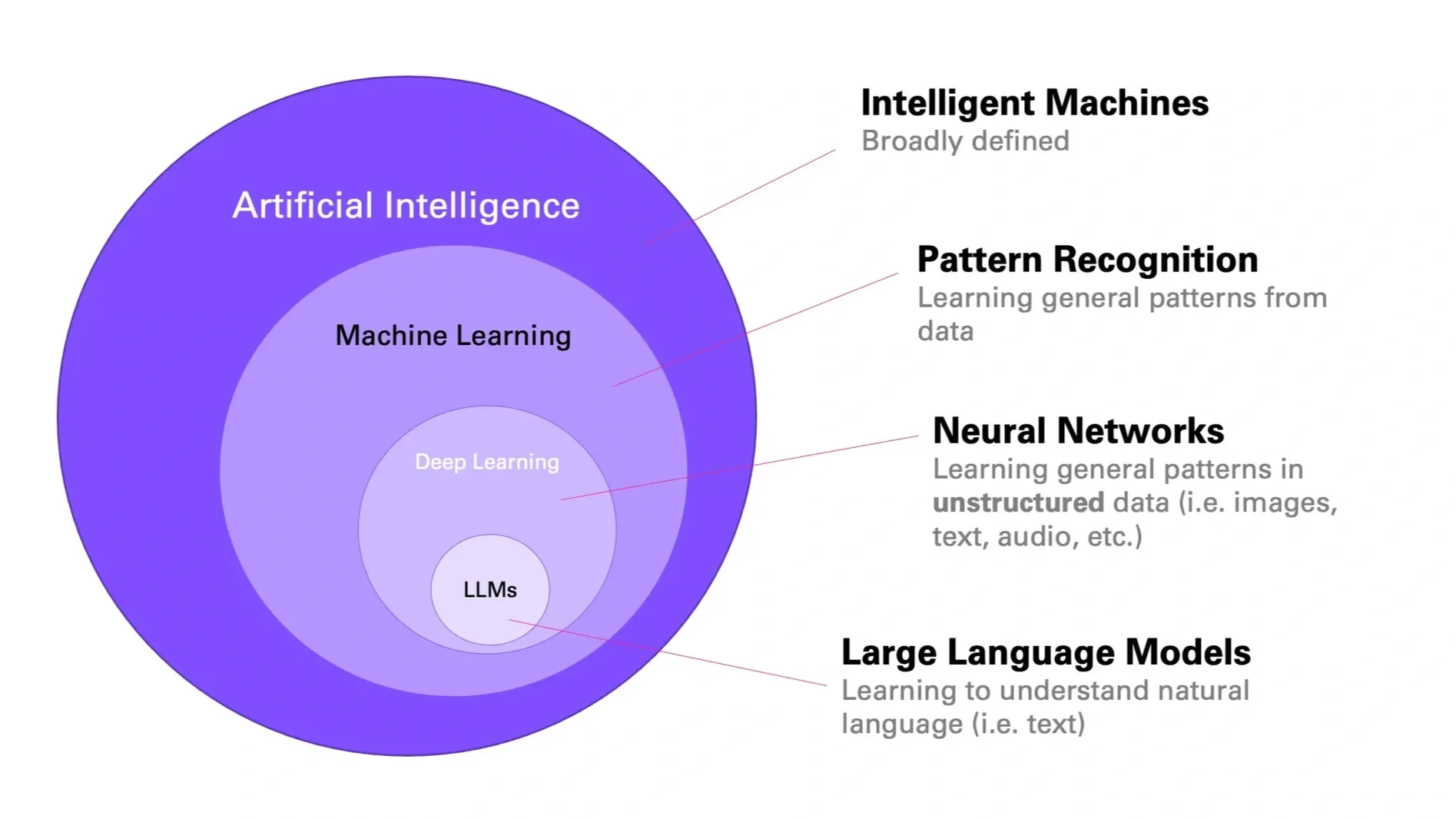

Artificial Intelligence is a broad term that encompasses things like facial recognition software, predictive text in your emails, virtual assistants like Alexa, Siri, and Google Assistant, and much more. Under the broad umbrella of AI is machine learning: programs that learn to recognize patterns in data and use those patterns to respond or create. The image above, from Andreas Stöffelbauer's Medium article How Large Language Models Work, may be helpful.

This is where it gets more complex. Deep learning is a type of machine learning that uses neural networks with many layers, which lets a program capture more complex connections between inputs and outputs. In other words, deep learning allows for more accurate or “thoughtful” responses to be generated. Developers have created digital neural networks that are able to learn from unstructured data, loosely similar to the human brain (though not as flexibly or deeply). Generative AI, another term you may have come across, fits here within deep learning. GenAI tools are programs that can create content including text, images, sounds, and more. ChatGPT by OpenAI may be the best-known example of GenAI, but others gaining traction include Google’s Gemini, Microsoft Copilot, and Meta AI.

Generative AI tools like ChatGPT are powered by large language models (LLMs). An LLM is a very large neural network trained on an enormous amount of text so that it can accurately predict what text should come next. When you put a prompt into ChatGPT, the response is generated one small piece of text at a time, with each prediction building on what came before. While LLMs are named for their relation to text, newer versions are multimodal: they can take in and create other media, not only text. At the time of writing, the latest LLM that powers ChatGPT is GPT-5 (Generative Pre-trained Transformer 5), which can be accessed through a paid subscription for $20 a month. The free version of ChatGPT is limited, though it might not feel that way for day-to-day use.
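To make the idea of text prediction more concrete, here is a minimal sketch in Python. It is a toy, not how ChatGPT actually works: it simply counts which word tends to follow which in a tiny sample sentence, while a real LLM uses a neural network trained on vastly more text. The sample text and the function names (`train_bigram_model`, `predict_next`, `generate`) are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    follow_counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        follow_counts[current_word][next_word] += 1
    return follow_counts


def predict_next(model, word):
    """Return the word seen most often after `word`, or None if unseen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(model, start_word, max_words=4):
    """Build text one word at a time by repeatedly predicting the next word."""
    output = [start_word.lower()]
    for _ in range(max_words):
        next_word = predict_next(model, output[-1])
        if next_word is None:
            break
        output.append(next_word)
    return " ".join(output)


# Hypothetical training text, chosen only to keep the example small.
corpus = "the cat sat on the mat . the cat sat on the sofa ."
model = train_bigram_model(corpus)

print(predict_next(model, "the"))          # prints "cat"
print(generate(model, "the", max_words=4)) # prints "the cat sat on the"
```

Even this tiny version shows the basic loop: look at what has been written so far, pick a likely next word, and repeat. It also hints at a limitation discussed later in this post: the program can only echo patterns that appear in the text it was given.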

It is important to know that ChatGPT and other generative AI tools are pre-trained on data from the internet, books, and other sources, including both publicly available data and data licensed from various entities. They are then further trained to generate text or other media more accurately and creatively in response to prompts. This is how ChatGPT is able to summarize an article for you and answer your questions (read more about how AI works here).

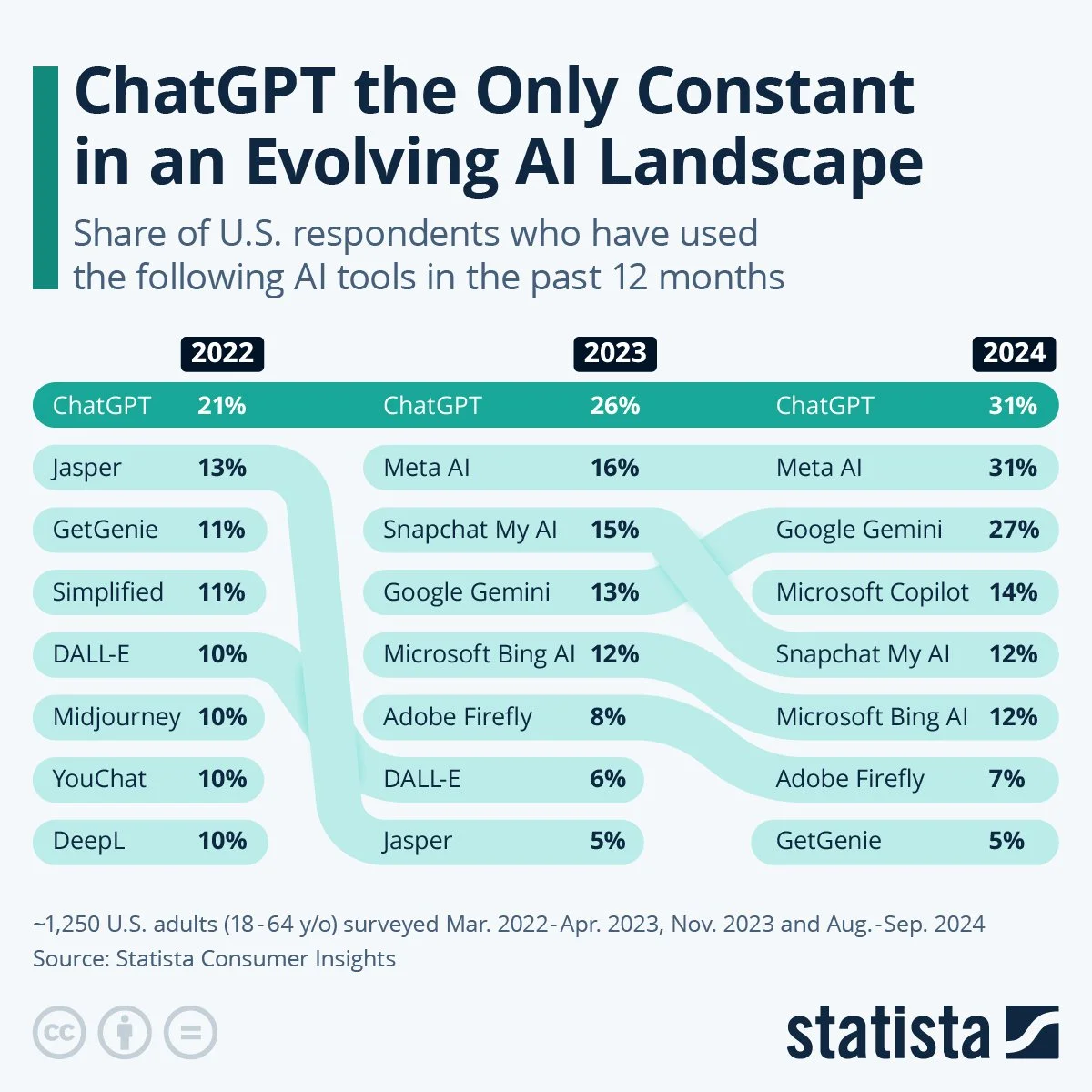

GenAI is not going away any time soon. ChatGPT broke records with its quick climb to one million users within 5 days (Exploding Topics) and, as this chart shows, it continues to be a constant alongside other AI tools.

Benefits

As you might imagine, and might already know from your own experience, generative AI can be a useful tool for users in their everyday lives. Below are a few AI pros we think are worth highlighting.

Writing Process. GenAI tools can help you in planning, organizing, and writing. If you need to prepare a speech or presentation, write a brief, or draft a professional-sounding email, ChatGPT can help with that. It will take your ideas and organize them into main points, offer information related to your desired topic, and suggest follow-up questions for you to get more tailored results.

Reading and Research. Because ChatGPT is powered by such an expansive and multimodal LLM, it provides what seems like a wealth of knowledge at your fingertips. You can ask ChatGPT to find articles or other sources of information, summarize them, and use them in writing or other research-related tasks. This can help you quickly understand long documents or jargon-filled reports and can benefit learning.

Creativity. GenAI is creative and fun. It can support your brainstorming endeavors, whether silly or serious, and can adhere to tones and genres at your request. Some teachers like to use ChatGPT to help students brainstorm ideas. And I’m sure we’ve all seen the unique artistry of GenAI.

Image generated by ChatGPT, OpenAI 2025

Drawbacks

As with all digital technologies, it’s important to stay educated on how AI works and how it impacts our lives and world. Being aware of these things and cautious about our use of AI can help us develop what some might call critical digital literacy. Below, we highlight a few aspects of AI you might want to be aware of.

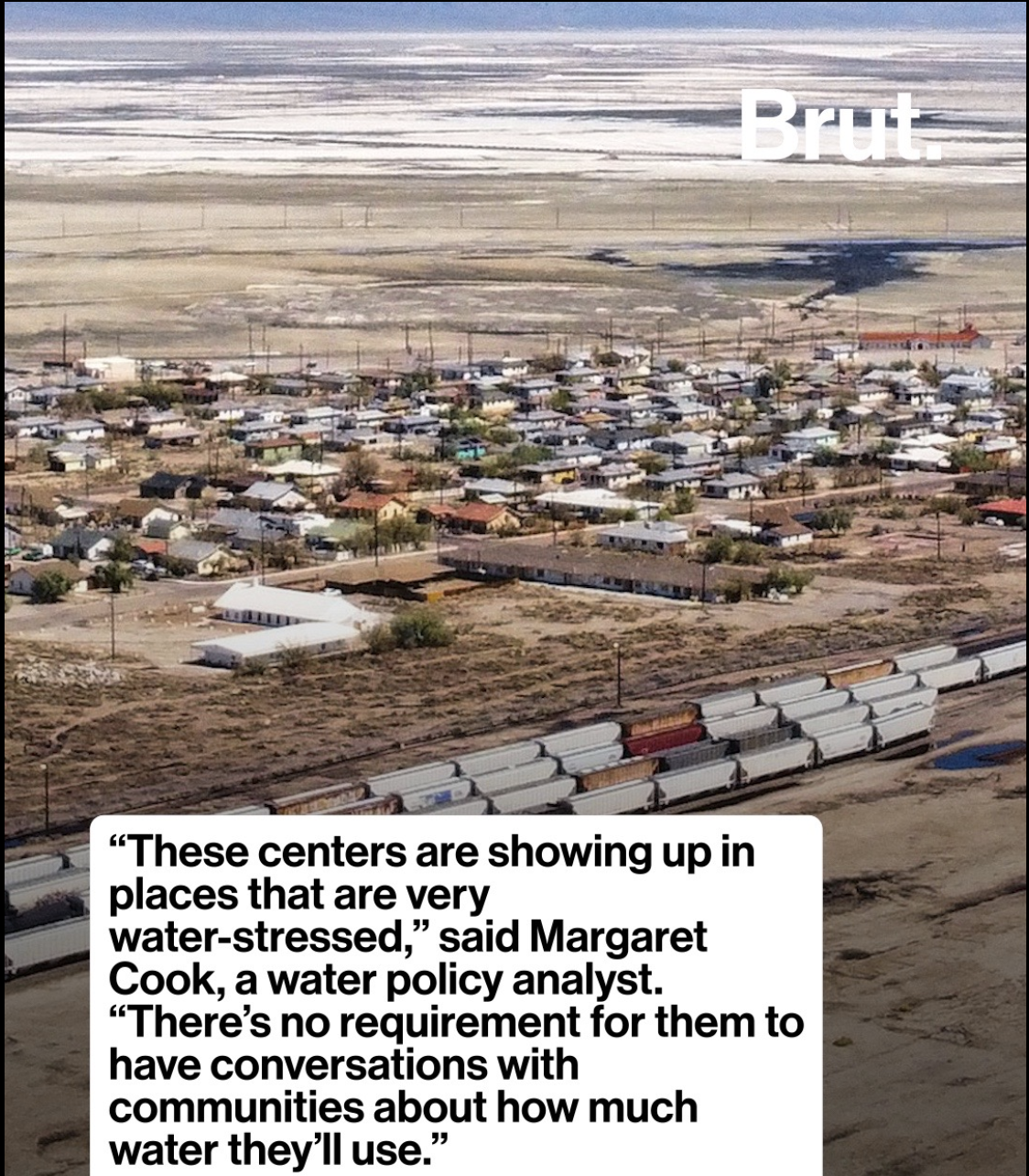

Environmental Impacts. The processes involved in creating, pretraining, and maintaining AI are both virtual and physical. As with cloud storage, the hardware that powers AI needs a physical place to be housed, water for cooling, and electricity for training and use (get more details in this MIT News article). Just in July of this year, Brutamerica reported that “AI data centers in Texas used 463 million gallons of water, as residents are told to take shorter showers” (Watch full video here). The physical impacts of AI are significant on their own, and they also compound existing environmental issues.

Image from Brutamerica’s TikTok that shows one landscape in Texas where AI data centers are impacting the environment and the community.

Ownership and Consent. Another drawback to generative AI is the lack of clear ownership of ideas, text, and artwork. In academic circles, many of my colleagues worry about the lack of citation practices or consent to use information and media. While OpenAI is careful about its policy language, ChatGPT does use personal information that is publicly available, as well as sources such as publications that have been licensed for pre-training generative AI. This calls into question who “owns” the rights to information, written works, art, and other created content.

Missing or Misrepresented Information. One serious drawback of AI tools is the prevalence of misinformation and hallucinations (Dobrin 25-28). An AI hallucination occurs when a tool presents incorrect data, information, or facts as if they were true. This can show up as a source list with made-up titles for articles that the listed authors didn’t write, an inaccurate historical timeline, or other errors that may not be noticeable unless you check the output. ChatGPT does have a small disclaimer encouraging users to check information as it can make mistakes. But many people do not take the time to review where the information they have been given came from or whether it is accurately stated and cited. Additionally, LLMs are trained on massive data sets, but those data sets are limited by digital boundaries. Knowledges, histories, and voices of marginalized groups may not be included if they are not digitized in some way, resulting in gaps in the information provided by AI. Even if included, they are often not foregrounded: “GenAI privileges specific kinds of information within a data set. This may be the result of a deliberate choice about which data to privilege; or it may simply be the result of some data occurring with disproportionate frequency in a data set” (Dobrin 105).

Future Considerations

In many ways, AI integration into our daily lives is just getting started. Whether we use it at work, school, or home, or don’t use it at all, conversations about it continue to arise, and more and more companies are integrating it into their technology. Lots of people are asking questions about the impact of AI on:

The workforce

Privacy

Critical thinking skills

Intellectual property and plagiarism

Ethics and authenticity

These topics get at the humanity tangled up in GenAI: How are humans impacted by AI? How do humans impact AI and its development and use? What does it mean to be human within our increasingly socio-technological world?

At Confianza Collective, we value human authenticity in all its complicated beauty. We also see positive uses for AI tools and continue to learn about generative AI as it evolves. Keep an eye out for part two of this blog series, in which we will grapple with some of these complexities and will offer some suggestions for organizations and storytellers to be smart users of AI.

Dr. Danielle Koepke is a content creator and consultant for social media strategy at Confianza Collective. She is also a teacher and researcher with expertise in digital storytelling, community health, and information literacy. To read more about Danielle’s work, check out her website.